accesses since October 1, 2005

copyright notice

Hal Berghel, PhD, Director

Center for Cybersecurity Research

Identity Theft and Financial Fraud Research and Operations Center

Abstract: Humans have recognized for several millennia that the complexity of their lives is a function of time. When we reminisce about the "good old days," we refer to their simplicity and tranquility. When we look forward, we always do so with trepidation. 9/11, cyber-terrorism, and the December 2004 Indian Ocean tsunami are all reminders that our fears are not without warrant.

In this paper we'll look forward to another impending disaster that I've labeled the Digital Tsunami. If we're not careful, the forthcoming digital tsunami may be far more threatening than its recent veridical counterpart.

Tired Metaphors

It was fashionable a few years back to use "Digital Pearl Harbor" as a generic label for the forthcoming disasters in cyberspace. The metaphor didn't stand the test of time because it was inappropriate. The real Pearl Harbor was a state-sponsored attack against a military complex for the purpose of eliminating a real or perceived threat of interference with Japan's Greater East Asia Co-Prosperity Sphere. Future digital attacks are more likely to be rogue operations launched by individuals than state-sponsored campaigns; they will be directed against the very fabric of our computer and telecommunications infrastructure, will primarily target civilians and their economy, and will not be pre-emptive. In the future, our greatest threat will come from small groups of hackers and cyber-terrorists with personal agendas. Further, such an attack won't appear to the networked world as an isolated event. It will affect a good part of the world. Hence, the title of this paper.

Hacking is another tired metaphor. In the mid-20th century, hacking lacked the pejorative overtone it has today. One referred to a programmer as "hacking out code" while writing sub-optimal programs under pressure to meet a deadline. In those days, hacking did not connote anything defective or malicious. The code just wasn't as pretty or well-documented as it would have been given more time. It was only with the advent of microcomputers in the 1970s that hacking came to signify illegal or unethical activity. I suspect that this was due to the widespread availability and lack of centralized control that characterized this era. Once thousands of programmers discovered that they could dig into the operating systems of millions of computers, a certain percentage of the wayward among them decided that this was the appropriate venue for mischief. Mischief-for-glory, electronic crime, digital maliciousness and revenge all became common primarily because of two factors: convenience and anonymity. Modern hackers form a relatively diffuse group, but seem to be linked together in their desire to avoid both a structured work setting and detection.

While hacking is at best imprecisely defined, general agreement can be obtained on a definition of hacking that includes (a) the creation, distribution, and deployment of malicious software (aka malware), (b) the abuse of computer, network, or system privileges, and (c) the disregard for any rights or expectations to privacy of the victim. We illustrate one type of abuse, (Distributed) Denial-of-Service, below:

As one may see, the act of denying service may itself be further sub-categorized according to the nature and locale of the exploit. Such is the case of hacking generally. The utilities involved in many cases share the functionality and power of commercial-grade software. (a) and (c) are also amenable to further reduction.

Everybody Gets Hacked

The plain truth is that we're all in it together. A hack that can bring down a military computer may similarly affect my IMAP mailer. Security settings notwithstanding, a Windows XP SP2 hack does as much damage to your Windows computer as to mine. A targeted hack is the selective deployment of an attack vector for political, anti-social, or personal reasons. Technologically, a hack is a hack.

A partial list of organizations that have fallen victim to a hack appears below:

This is a formidable list. What is even more impressive is that the *.orgs operate as security companies. If they can get hacked, what chance do the rest of us have?

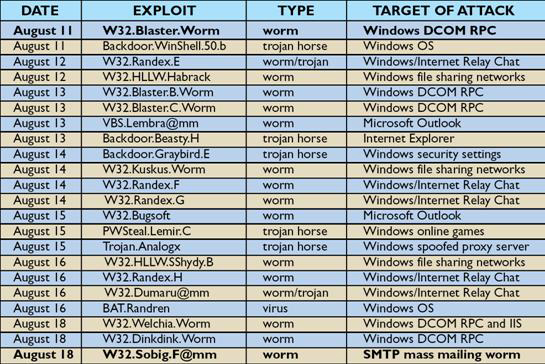

To provide some estimate of the "TCH" (total cost of a hack), consider the following list of exploits during one week of August 2003.

Figure 1: The third week of Malware Month of the Millennium. Source: [4]

To place this in an economic perspective, Mi2g projected the combined losses resulting from the W32.Blaster and W32.Sobig worms to exceed $50 billion [6]. It should be re-emphasized that this loss resulted from two exploits during a very short time span. When one considers all exploits over a decade, the losses are well into the hundreds of billions of dollars.

The previous discussion applies to the product of hacking - primarily malware. However, within the past few years we've seen the art of hacking extended beyond malware to include Internet-based hacking. The difference is that malware uses the Internet as a distribution channel, whereas Internet-based hacking uses the Internet itself as the means of attack. Examples of Internet-based hacking include:

While a detailed review of perception management and social engineering is beyond the scope of this paper, many of these topics are covered in articles and columns on my Web site [2]. I'll leave this topic with the observation that if any of you have ever asked how spam can come from yourself to yourself, you're aware of the problems of Internet-based hacking.

The Tools of the Trade

At this writing the arsenal of tools available to the hacker is staggering. And these are not unpolished utilities created by neophytes. Some of the modern hacking tools are more impressive than some commercial-grade software. Here are a few examples that you can look up on Google:

And the list goes on and on.

The list below provides some insight into a basic hacking strategy. Tools, programs, and Internet resources that are used for the specific tactics appear in parentheses.

A more detailed overview of hacking strategies and tools may be found in [5].

The Storm Cloud

The discussion above traces computer and network vulnerabilities from their stone age (1980) to their golden age (2005). The attacks and vulnerabilities that we've covered are, even collectively, in and of themselves insufficient to produce our digital tsunami. All of the hacker tools and techniques to the present are inadequate to the task. At worst we suffer through an occasional digital tornado or hurricane (e.g., Code Red and W32.Blaster). The scariest part is yet to come.

In order to produce a tsunami we have to add the element of surprise. To illustrate, in the case of both Blaster and Sobig, patches were available from the Microsoft download site at least thirty days before the exploits were released. What should have been a non-event became a $50 billion problem. Had the patches not been available, the costs would have been many times that. In the case of Code Red [1], the propagation of the worm was slow enough that preventative measures rendered it harmless. Even the recent self-replicating malware (aka worms) with now-popular names like Ramen, Nimda, Klez, Slapper, Slammer, and the like have yet to create cyber-chaos. In all cases, the virulence was diminished by the lack of surprise.

My analysis of the art of cyber-surprise suggests that the surprise element of existing malware has been thwarted by four factors. First, malware has so far attacked the Internet infrastructure piecemeal. Malware can attack it by operating system, by function (server or workstation), by the nature of the appliance (computer, router, firewall, gateway), by Internet region, or by level (network layer, link layer, application layer). In each case, the unaffected programs and appliances keep the Internet alive until help arrives in the way of Internet forensics.

Second, the nature of the vulnerability has historically been single-purpose. Except for the latest incarnations of Nimda and Ramen, I don't know of any attacks that weren't single-purpose... a buffer-overflow here, SQL injection there. Generally, we're dealing with one narrow threat at a time. There is an analogy between narrow-spectrum network vulnerabilities and immunology. Macrophages, lymphocytes, antibodies, antigen receptors, antigen-presenting cells and the like each ameliorate the effect of a particular pathogen or virus, just as firewalls, intrusion detection systems, bastion hosts, anti-virus, anti-spyware and anti-spam software ameliorate the effects of phishing, spam, Trojan horses, worms and the like. AIDS has shown that the most devastating pandemics are those that attack the entire immune system as a whole. Hold that thought.

Third, the spread of a vulnerability tends to be exponential with time - our model is based on our experience with viruses and worms. The section on reconnaissance above illustrates that the hacker's attack is usually sequential. The basic sequence would be to find computers, discover security weaknesses, create tools to exploit weaknesses, try an experimental attack, launch the attack, and cover tracks, in that order. This all takes time, and to paraphrase Louis Pasteur, time favors the prepared mind. Take away the advance warning, and the element of surprise comes within range.
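To make the role of time concrete, here is a minimal sketch of exponential spread, assuming a simple discrete susceptible-infected model. The function name, the host count, and the contact rate are illustrative assumptions and are not taken from any measured outbreak.

```python
def simulate_spread(hosts: int, initially_infected: int,
                    contacts_per_step: float, steps: int) -> list[int]:
    """Return the (rounded) number of infected hosts after each time step.

    Hypothetical model: every infected host reaches `contacts_per_step`
    other hosts per step, and only contacts that land on a still
    susceptible host produce a new infection.
    """
    infected = float(initially_infected)
    history = [initially_infected]
    for _ in range(steps):
        susceptible_fraction = 1.0 - infected / hosts
        new_infections = contacts_per_step * infected * susceptible_fraction
        infected = min(float(hosts), infected + new_infections)
        history.append(round(infected))
    return history


# Assumed scenario: one million vulnerable hosts, a single initial infection.
history = simulate_spread(hosts=1_000_000, initially_infected=1,
                          contacts_per_step=1.0, steps=30)

# First step at which at least half of the hosts are infected.
half_point = next(step for step, count in enumerate(history)
                  if count >= 500_000)

print(history[:6])
print("steps until half the hosts are infected:", half_point)
```

In this toy model the early steps roughly double the infected population, so nearly all of the damage arrives in the last few steps before saturation. The steps before that point are the window in which patches and other preventative measures can still be applied.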

Fourth, thus far malware has been definable by means of identifying signatures. Your favorite anti-virus software works by comparing digital information on your computer and network with these signatures. A profile is built for each piece of malware. This profile might include ports affected, Internet protocols used, geographical distribution, code fragments, and so forth. Think of it as a business card for malware. For the uninitiated, a casual glance at how this works is worth the effort - see, e.g., www.symantec.com in this regard.
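As a rough illustration of signature matching, the sketch below checks a block of bytes against a small table of code fragments. It is a simplified assumption of how such a comparison might work, not a description of any vendor's product; the signature names and byte patterns are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signature:
    """A hypothetical malware profile: a name plus one identifying fragment."""
    name: str
    fragment: bytes


# Invented signatures for illustration only; real profiles are far richer
# (ports affected, protocols used, and so forth).
SIGNATURES = [
    Signature("Example.Worm.A", b"EXAMPLE-WORM-A-MARKER"),
    Signature("Example.Trojan.B", bytes.fromhex("deadbeef0badf00d")),
]


def scan(data: bytes, signatures=SIGNATURES) -> list[str]:
    """Return the names of all signatures whose fragment occurs in `data`."""
    return [sig.name for sig in signatures if sig.fragment in data]


clean_file = b"just an ordinary document"
infected_file = b"header..." + b"EXAMPLE-WORM-A-MARKER" + b"...trailer"

print(scan(clean_file))     # []
print(scan(infected_file))  # ['Example.Worm.A']
```

The scanner can only recognize fragments it already holds, which is why this defense depends on the malware having been seen and profiled beforehand.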

So we have four defenses that have saved our bacon thus far. Previous attacks have targeted pieces of the Internet, have exploited individual vulnerabilities, have been relatively slow to spread, and have betrayed a pattern or signature that may be easily detected. What would produce the digital tsunami that I predicted? That's easy enough to answer: an exploit that attacks the entire Internet with multiple vulnerabilities, and that will propagate instantaneously in disguise. This part I know. What I'm not sure about is who will create this tsunami, and what they will name it.

Conclusions

I traced the evolution of hacking from its earliest days to the present. I concluded that the future digital tsunami will simply overcome the deficiencies of the previous exploits: it will be comprehensive rather than piecemeal, broad rather than narrow, fast rather than slow, and it will preserve its anonymity.

In fact, work is already underway in the hacking community - so far along, I might add, that their terminology is already creeping into the lexicon. Malware that will attack all layers and appliances of the Internet is called "multi-platform." Malware that is broad-spectrum is called "multi-exploit" (a recent version of Ramen had three different exploits while Nimda had twelve). Malware that spreads near-instantaneously is called "Zero-Day" (incidentally, the technical definition of a Zero-Day exploit is one in which the first appearance coincides with the first attack, so that there's no advance warning). Malware that camouflages itself as it propagates is called "polymorphic" or "metamorphic." Those with a technical programming background will profit from Ed Skoudis' and Lenny Zeltser's new book on this subject [7].
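The effect of camouflage on signature matching can be sketched with a harmless example. Assuming a detector that looks for a fixed byte fragment, the snippet below re-encodes the same benign marker string with different single-byte XOR keys. The marker, the keys, and the function names are all hypothetical, and actual polymorphic code is considerably more elaborate.

```python
MARKER = b"EXAMPLE-WORM-A-MARKER"  # hypothetical signature fragment
KEYS = (0x21, 0x5A, 0x7F)          # arbitrary example keys


def xor_encode(data: bytes, key: int) -> bytes:
    """XOR every byte with a one-byte key; applying it twice restores the data."""
    return bytes(b ^ key for b in data)


def matches_signature(data: bytes, fragment: bytes = MARKER) -> bool:
    """Static check: does the known fragment appear verbatim in the data?"""
    return fragment in data


original = b"prefix " + MARKER + b" suffix"
variants = [xor_encode(original, key) for key in KEYS]

print(matches_signature(original))                # True
print([matches_signature(v) for v in variants])   # [False, False, False]
print(len(set(variants)))                         # 3
print(all(xor_encode(v, k) == original
          for v, k in zip(variants, KEYS)))       # True
```

Each variant carries the same content yet presents different bytes, so a profile built from one copy says nothing about the next. This is the property that would defeat the fourth defense described earlier.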

So, the digital tsunami is before us. The only question is when.

On that happy note, let me again thank the organizers for inviting me to give the keynote address at IATUL 2005.

REFERENCES

[1] Berghel, H., "The Code Red Worm," Communications of the ACM, 44:11 (2001), pp. 15-19.

[2] www.berghel.net. In particular, see the sections under publications and columns.

[3] Berghel, H. and J. Uecker, "WiFi Attack Vectors," Communications of the ACM, 48:8 (2005), pp. 21-27.

[4] Berghel, H., "Malware Month of the Millennium," Communications of the ACM, 46:12 (2003), pp. 15-19.

[5] Harrison, J. and H. Berghel, "A Protocol Layer Survey of Network Security," in M. Zelkowitz (ed.): Advances in Computers v. 68, Elsevier, Amsterdam, pp. 109-158.

[6] Mi2g.com

[7] Skoudis, E. and L. Zeltser, Malware: Fighting Malicious Code, Prentice-Hall, Upper Saddle River, 2004.